Dominik Kos, Kristina Skufca

DATA ENGINEERS

Previous blog posts from Data Catalog series:

Data Catalog and Testing Open Source Data Catalogs

Introduction

This is the third part of our multi-part series on Data Catalogs. Following our previous post, we now test OpenMetadata against the same parameters we used for Amundsen.

OpenMetadata

Introduction

OpenMetadata is an open-source project that is driving Open Metadata standards for data. It is an open standard with a centralized metadata store and ingestion framework, supporting connectors for a wide range of services. The metadata ingestion framework enables you to customize or add support for any service. REST APIs enable you to integrate OpenMetadata with existing toolchains. Using the OpenMetadata user interface (UI), data consumers can discover the right data to use in decision making, and data producers can assess usage and consumer experience in order to plan improvements and prioritize bug fixes.

OpenMetadata enables metadata management end-to-end, giving you the ability to unlock the value of data assets in the common use cases of data discovery and governance, but also in emerging use cases related to data quality, observability, and people collaboration.

Components

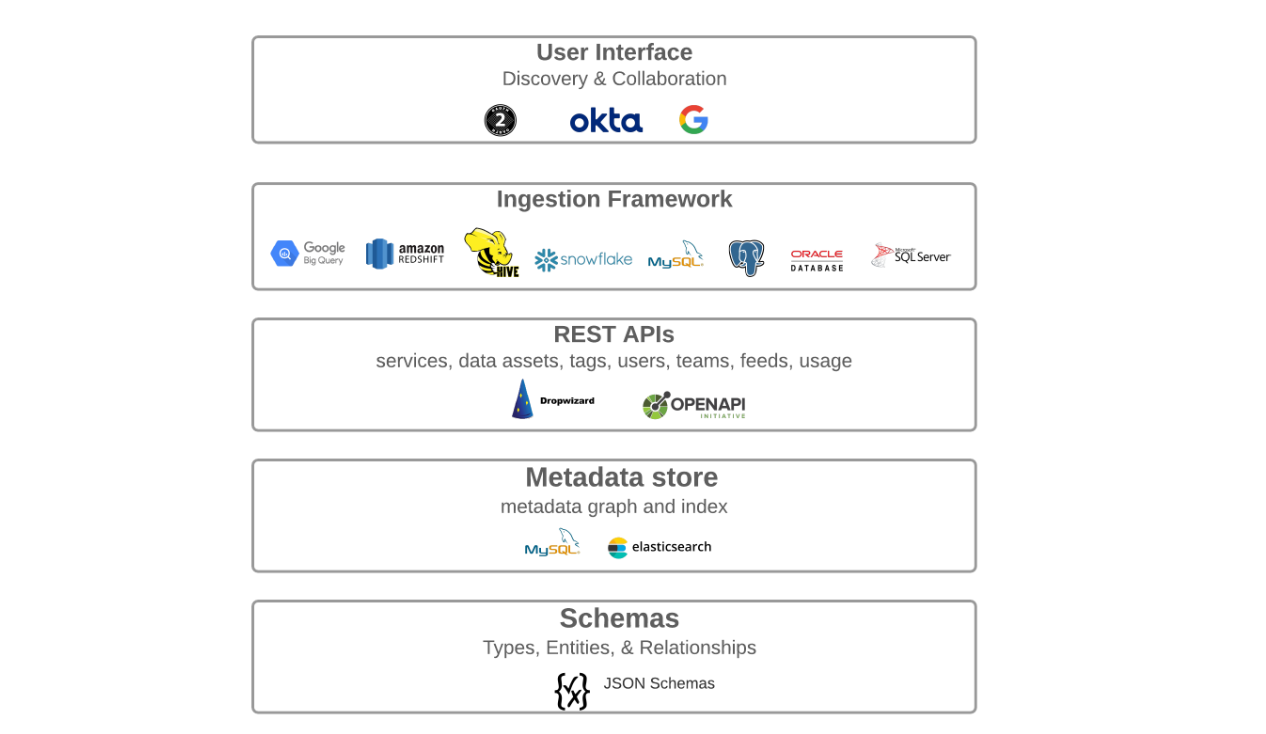

Unlike Amundsen, OpenMetadata works more like a black box. Its internal system consists of five main components that work as one whole, so in principle it should be easier to set up and use. The key components of OpenMetadata are the following:

- User Interface (UI) – a central place for users to discover and collaborate on all data.

- Ingestion framework – a pluggable framework for integrating tools and ingesting metadata to the metadata store. The ingestion framework already supports well-known data warehouses. See the Connectors section for a complete list and documentation about supported services.

- Metadata APIs – for producing and consuming metadata, built on schemas, for user interfaces and for integrating tools, systems, and services.

- Metadata store – stores a metadata graph that connects data assets with user- and tool-generated metadata.

- Metadata schemas – define the core abstractions and vocabulary for metadata, with schemas for Types, Entities, and Relationships between entities. This is the foundation of the Open Metadata Standard. See the Schema Concepts section on the official OpenMetadata website to learn more about metadata schemas.

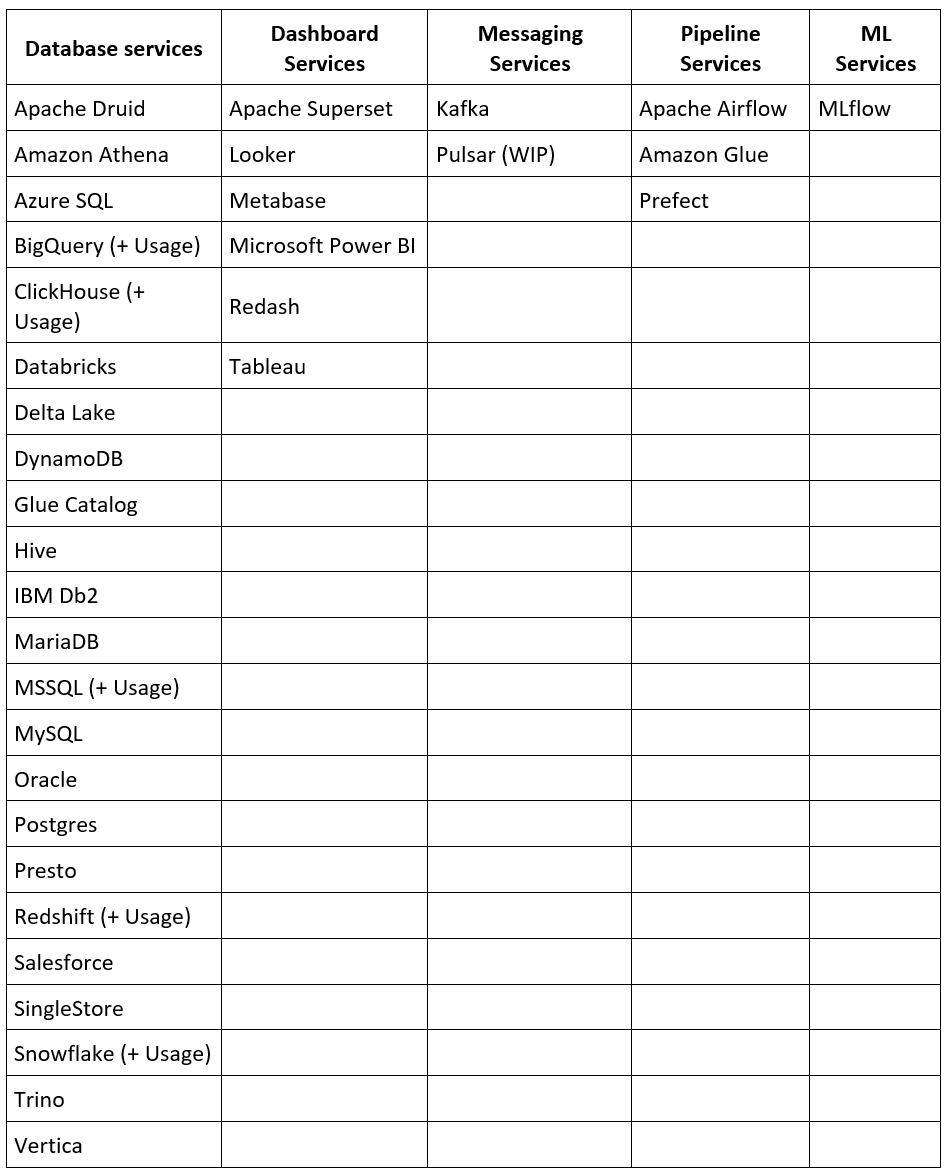
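The Metadata APIs are exposed over REST, which is how OpenMetadata integrates with existing toolchains. As a minimal sketch, assuming a local OpenMetadata server on its default port 8585 with JWT bearer-token authentication, the snippet below builds a request against the `/api/v1/tables` endpoint using only the Python standard library. The endpoint path, the `limit` parameter, and the `data`/`fullyQualifiedName` response fields reflect our understanding of the API and may differ between releases; `build_list_tables_request` and `fetch_table_names` are hypothetical helper names.

```python
import json
import urllib.parse
import urllib.request

# Assumption: a local OpenMetadata server listening on its default port 8585.
BASE_URL = "http://localhost:8585/api/v1"


def build_list_tables_request(base_url: str, token: str, limit: int = 10) -> urllib.request.Request:
    """Build (but do not send) a GET request that lists table entities."""
    query = urllib.parse.urlencode({"limit": limit})
    request = urllib.request.Request(f"{base_url}/tables?{query}", method="GET")
    # Assumption: the server is configured for JWT bearer-token authentication.
    request.add_header("Authorization", f"Bearer {token}")
    request.add_header("Accept", "application/json")
    return request


def fetch_table_names(request: urllib.request.Request) -> list:
    """Send the request and return each table's fully qualified name."""
    with urllib.request.urlopen(request, timeout=10) as response:
        payload = json.load(response)
    # Assumption: list endpoints wrap their results in a "data" array.
    return [table["fullyQualifiedName"] for table in payload.get("data", [])]


if __name__ == "__main__":
    req = build_list_tables_request(BASE_URL, token="<your-jwt-token>")
    print(req.full_url)  # http://localhost:8585/api/v1/tables?limit=10
    # print(fetch_table_names(req))  # requires a running server
```

Separating request construction from sending keeps the part that encodes our assumptions (URL, headers) easy to inspect without a running server.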

Connectors

OpenMetadata provides connectors that enable you to perform metadata ingestion from a number of common databases, dashboards, messaging, and pipeline services. With each release, there are additional connectors added and the ingestion framework provides a structured and straightforward method for creating your own connectors. See the table below for a list of supported connectors:

In the case of OpenMetadata, the following two connectors were tested: BigQuery and Kafka.

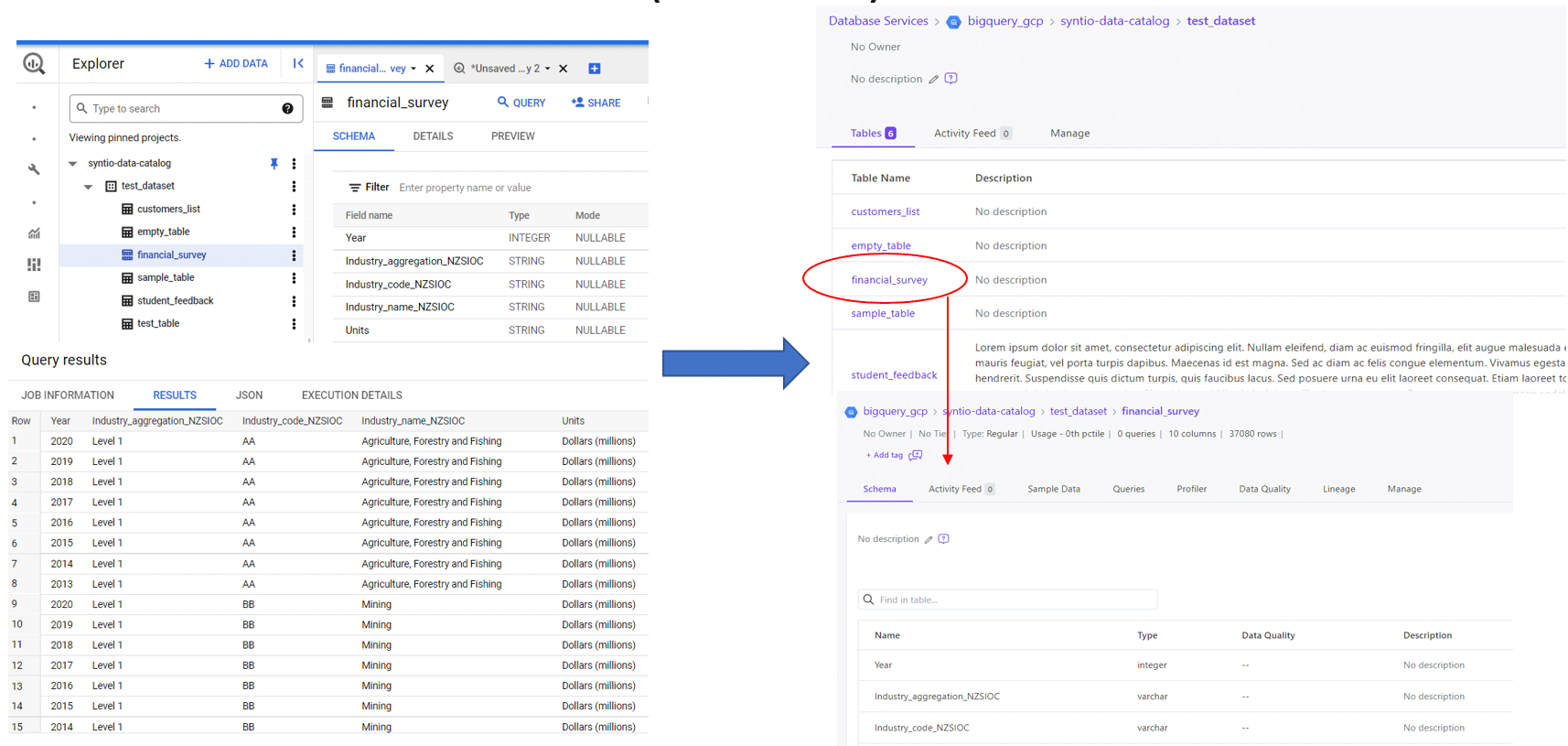

BigQuery

BigQuery is available as an out-of-the-box connector in OpenMetadata. Alongside metadata ingestion, it comes with data usage and data profiler scripts that are enabled separately. To test all of it, the only additional input we had to provide was the Google Application Credentials.
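To illustrate what providing the Google Application Credentials involves, the sketch below turns a GCP service-account key file into a BigQuery ingestion workflow config. It assumes the workflow layout (`source`, `sink`, `workflowConfig`) used by the OpenMetadata ingestion framework in the releases we are aware of. Key names such as `gcpConfig` (older releases used `gcsConfig`) and the `authProvider` value have changed between versions, so treat them as assumptions and check the connector documentation for your release. The helper `bigquery_workflow_config` is hypothetical.

```python
import json
import os

# Maps the snake_case fields of a GCP service-account key file to the
# camelCase fields we assume the OpenMetadata BigQuery credentials block expects.
SERVICE_ACCOUNT_KEYS = {
    "type": "type",
    "project_id": "projectId",
    "private_key_id": "privateKeyId",
    "private_key": "privateKey",
    "client_email": "clientEmail",
    "client_id": "clientId",
    "auth_uri": "authUri",
    "token_uri": "tokenUri",
    "auth_provider_x509_cert_url": "authProviderX509CertUrl",
    "client_x509_cert_url": "clientX509CertUrl",
}


def bigquery_workflow_config(service_account: dict,
                             service_name: str = "local_bigquery",
                             server: str = "http://localhost:8585/api") -> dict:
    """Build a metadata-ingestion workflow config for BigQuery (hypothetical helper)."""
    missing = [key for key in SERVICE_ACCOUNT_KEYS if key not in service_account]
    if missing:
        raise ValueError(f"service-account key file is missing fields: {missing}")
    credentials = {camel: service_account[snake] for snake, camel in SERVICE_ACCOUNT_KEYS.items()}
    return {
        "source": {
            "type": "bigquery",
            "serviceName": service_name,
            "serviceConnection": {
                # Assumption: newer releases name this block "gcpConfig";
                # older releases used "gcsConfig".
                "config": {"type": "BigQuery", "credentials": {"gcpConfig": credentials}},
            },
            "sourceConfig": {"config": {"type": "DatabaseMetadata"}},
        },
        "sink": {"type": "metadata-rest", "config": {}},
        "workflowConfig": {
            # Assumption: authentication is disabled, as in a local test setup.
            "openMetadataServerConfig": {"hostPort": server, "authProvider": "no-auth"},
        },
    }


if __name__ == "__main__":
    # GOOGLE_APPLICATION_CREDENTIALS conventionally points at the key file.
    key_path = os.environ.get("GOOGLE_APPLICATION_CREDENTIALS")
    if key_path:
        with open(key_path) as handle:
            config = bigquery_workflow_config(json.load(handle))
        print(json.dumps(config, indent=2))
```

The resulting dict could be written out as YAML and passed to the ingestion CLI (for example `metadata ingest -c bigquery.yaml`); we have not verified this exact config against a specific release.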

Kafka

The Kafka connector was only partially implemented in OpenMetadata, offering just a metadata ingestion script. To test it, we had to provide the Kafka brokers and the Schema Registry. More in-depth testing of this connector is left for future work.
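The two inputs we had to supply for Kafka, the broker list and the Schema Registry URL, can be sanity-checked before running ingestion. The sketch below validates them and assembles a messaging metadata workflow config. The `bootstrapServers`, `schemaRegistryURL`, and `MessagingMetadata` keys reflect our reading of the OpenMetadata Kafka connector configuration and may vary by release; `parse_brokers` and `kafka_workflow_config` are hypothetical helpers.

```python
from urllib.parse import urlparse


def parse_brokers(brokers: str) -> list:
    """Split a comma-separated 'host:port' list and validate each entry."""
    parsed = []
    for entry in brokers.split(","):
        host, sep, port = entry.strip().rpartition(":")
        if not sep or not host or not port.isdigit():
            raise ValueError(f"invalid broker address: {entry!r}")
        parsed.append((host, int(port)))
    return parsed


def kafka_workflow_config(brokers: str, schema_registry_url: str,
                          service_name: str = "local_kafka",
                          server: str = "http://localhost:8585/api") -> dict:
    """Build a Kafka metadata-ingestion workflow config (hypothetical helper)."""
    parse_brokers(brokers)  # fail fast on malformed broker addresses
    if urlparse(schema_registry_url).scheme not in ("http", "https"):
        raise ValueError(f"schema registry URL must be http(s): {schema_registry_url!r}")
    return {
        "source": {
            "type": "kafka",
            "serviceName": service_name,
            "serviceConnection": {
                # Assumption: key names as in the Kafka connector docs we have seen.
                "config": {
                    "type": "Kafka",
                    "bootstrapServers": brokers,
                    "schemaRegistryURL": schema_registry_url,
                },
            },
            "sourceConfig": {"config": {"type": "MessagingMetadata"}},
        },
        "sink": {"type": "metadata-rest", "config": {}},
        "workflowConfig": {
            # Assumption: authentication is disabled, as in a local test setup.
            "openMetadataServerConfig": {"hostPort": server, "authProvider": "no-auth"},
        },
    }


if __name__ == "__main__":
    print(parse_brokers("localhost:9092, broker-2:9093"))
    print(kafka_workflow_config("localhost:9092", "http://localhost:8081")["source"]["type"])
```

Validating early turns a typo in a broker address into an immediate error rather than a failed or hanging ingestion run.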

Business Glossary

A glossary in OpenMetadata is a controlled vocabulary that helps establish a consistent meaning for terms, build a common understanding, and create a knowledge base. Glossary terms can also help to organize or discover data entities. OpenMetadata models a Glossary that organizes terms with hierarchical, equivalent, and associative relationships.

A glossary is a hierarchical collection of Glossary Terms that belong to a domain.

- A glossary term is specified with a preferred term for a concept or terminology (for example, Customer).

- A glossary term must have a unique and clear definition to establish consistent usage and understanding of the term.

- A term can include Synonyms, which are other terms used for the same concept (for example, Client, Shopper, Purchaser).

- A term can have child terms that further specialize it. For example, the glossary term Customer can have child terms such as Loyal Customer, New Customer, and Online Customer.

- A term can also have Related Terms that capture related concepts. For Customer, related terms could be Customer Lifetime Value (LTV), Customer Acquisition Cost, etc.

Each glossary term lists its Assets, through which you can discover all the data assets related to the term. Each term has a life cycle status (e.g., Draft, Active, Deprecated, or Deleted) and a set of Reviewers who review and accept changes to the glossary for governance.

Glossary terms can be used to label or tag data assets with additional metadata that describes and categorizes them. Glossaries are important for data discovery, retrieval, and exploration through conceptual terms, and they support data governance.

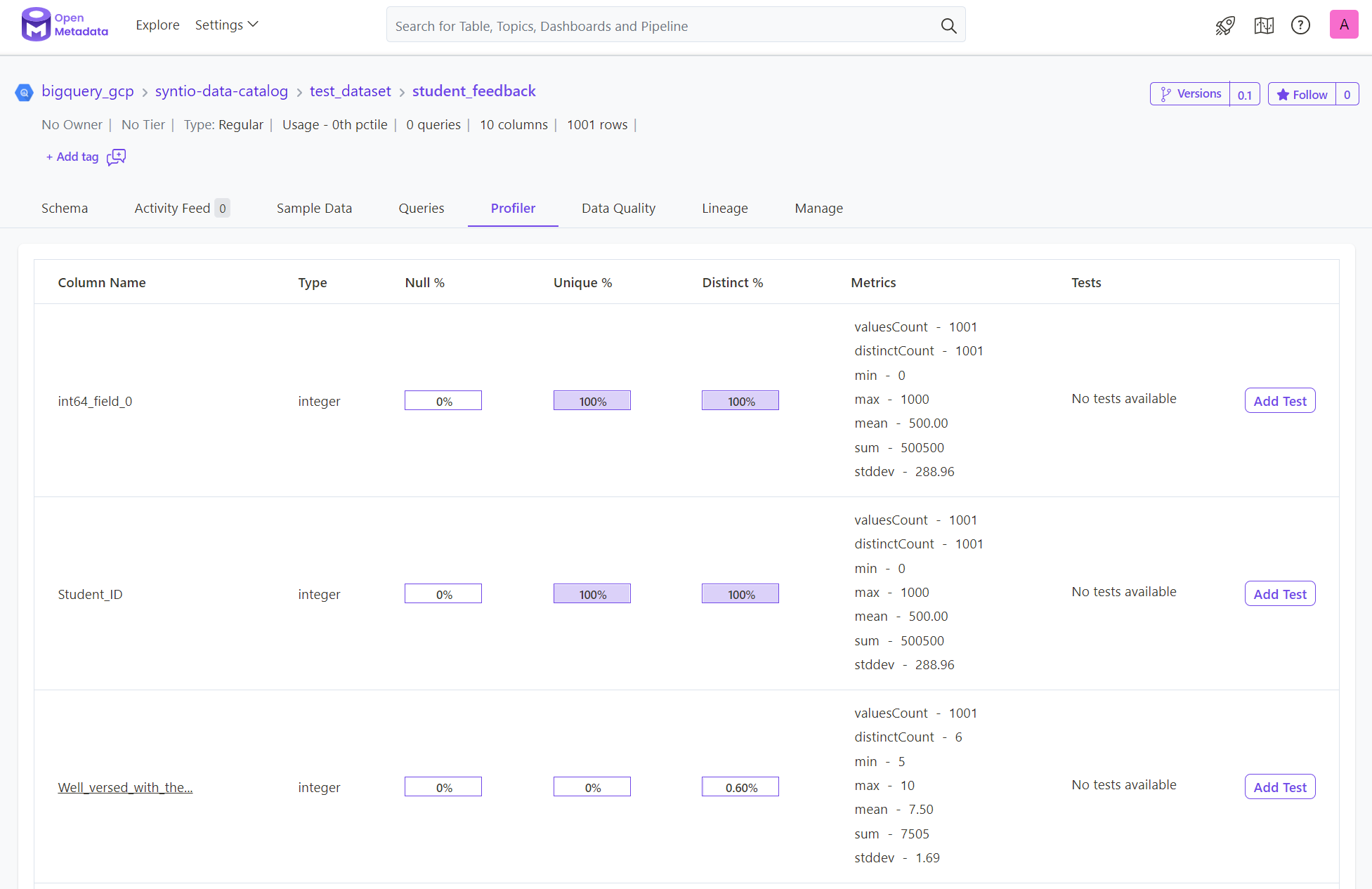
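To make the glossary model above more concrete, here is a small in-memory sketch of terms with synonyms, child terms, related terms, assets, reviewers, and a life cycle status. It is a hypothetical illustration of the concepts, not OpenMetadata's actual schema or API; the class names, the example definitions, and the rule that only a listed reviewer may activate a term are our own assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DRAFT = "Draft"
    ACTIVE = "Active"
    DEPRECATED = "Deprecated"
    DELETED = "Deleted"


@dataclass
class GlossaryTerm:
    name: str                                           # preferred term
    definition: str
    synonyms: set[str] = field(default_factory=set)     # equivalent terms
    related: set[str] = field(default_factory=set)      # associative relationships
    children: list[GlossaryTerm] = field(default_factory=list)  # hierarchy
    reviewers: set[str] = field(default_factory=set)
    assets: set[str] = field(default_factory=set)       # tagged data assets
    status: Status = Status.DRAFT

    def add_child(self, child: GlossaryTerm) -> GlossaryTerm:
        self.children.append(child)
        return child

    def walk(self):
        """Yield this term and all of its descendants, depth first."""
        yield self
        for child in self.children:
            yield from child.walk()


@dataclass
class Glossary:
    name: str
    roots: list[GlossaryTerm] = field(default_factory=list)

    def terms(self):
        for root in self.roots:
            yield from root.walk()

    def lookup(self, word: str) -> GlossaryTerm | None:
        """Resolve a word to its preferred term via the name or a synonym."""
        needle = word.casefold()
        for term in self.terms():
            if term.name.casefold() == needle or needle in {s.casefold() for s in term.synonyms}:
                return term
        return None

    def approve(self, word: str, reviewer: str) -> None:
        """Activate a term; only one of its reviewers may do so (our assumption)."""
        term = self.lookup(word)
        if term is None:
            raise KeyError(word)
        if reviewer not in term.reviewers:
            raise PermissionError(f"{reviewer} is not a reviewer of {term.name}")
        term.status = Status.ACTIVE


# Hypothetical example data based on the Customer example above.
customer = GlossaryTerm(
    "Customer",
    "Hypothetical definition: a person or organization that buys our products.",
    synonyms={"Client", "Shopper", "Purchaser"},
    related={"Customer LTV", "Customer Acquisition Cost"},
    reviewers={"data-steward"},
)
for child_name in ("Loyal Customer", "New Customer", "Online Customer"):
    customer.add_child(GlossaryTerm(child_name, f"Hypothetical definition of {child_name}."))
sales = Glossary("Sales", roots=[customer])

print(sales.lookup("shopper").name)  # Customer
sales.approve("Client", reviewer="data-steward")
print(customer.status)  # Status.ACTIVE
```

Resolving synonyms to a single preferred term is what lets a search for "Shopper" land on the same assets as "Customer".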

Data Profiling

In OpenMetadata, the data profiler is enabled as part of metadata ingestion through a separate configuration file. Data profiles let you check for null values in non-null columns, duplicates in unique columns, and similar issues, and the descriptive statistics they provide give you a better understanding of column data distributions. Only some connectors come with a data profiling ingestion.
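The kinds of checks described above can be sketched in a few lines of plain Python. This is not how OpenMetadata's profiler is implemented; `profile_column` is a hypothetical helper that computes a null count, a duplicate count, and basic descriptive statistics for one column, and flags nulls in a non-null column or duplicates in a unique column.

```python
import statistics
from collections import Counter
from typing import Optional, Sequence


def profile_column(values: Sequence[Optional[float]], nullable: bool = True,
                   unique: bool = False) -> dict:
    """Compute simple profile metrics for one column (hypothetical helper)."""
    non_null = [v for v in values if v is not None]
    null_count = len(values) - len(non_null)
    # Every occurrence of a value beyond its first counts as one duplicate.
    duplicate_count = sum(count - 1 for count in Counter(non_null).values())
    profile = {
        "row_count": len(values),
        "null_count": null_count,
        "duplicate_count": duplicate_count,
        "min": min(non_null) if non_null else None,
        "max": max(non_null) if non_null else None,
        "mean": statistics.fmean(non_null) if non_null else None,
        "stddev": statistics.pstdev(non_null) if non_null else None,
    }
    # Constraint checks: nulls in a non-null column, duplicates in a unique column.
    issues = []
    if not nullable and null_count:
        issues.append(f"{null_count} null value(s) in a non-null column")
    if unique and duplicate_count:
        issues.append(f"{duplicate_count} duplicate value(s) in a unique column")
    profile["issues"] = issues
    return profile


if __name__ == "__main__":
    report = profile_column([1, 2, 2, None, 5], nullable=False, unique=True)
    print(report["null_count"], report["duplicate_count"])  # 1 1
    print(report["issues"])
```

For the sample column, the report flags one null and one duplicate, and its mean of 2.5 is computed over the four non-null values only.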

Summary

After briefly researching OpenMetadata as a potential Data Catalog that we could build upon, we compiled the following list of pros and cons:

PROS:

- Offers a large list of approximately 30 connectors. Since we did not test all of them in this research, some of them might be outdated or might not work as expected.

- A very active Slack channel where current users of OpenMetadata can ask questions and seek help from developers who work or have previously worked on the tool itself.

- The developers also hold meetings and demos of the latest releases, explaining new features and how to use them.

- Most importantly, it is open source and released under the Apache License, Version 2.0, so it can be used by anyone who is willing to roll up their sleeves and get to know the tool better.

CONS:

- The biggest potential issue is its somewhat lacking documentation: parts of it are not up to date, and some next steps are not explained correctly. This can cause problems for anyone working with tools and software they have not used before.

- Some of the out-of-the-box solutions might not work as expected, or at least not as hoped.

- Even ingesting some dummy data requires development experience or help from data engineers; the whole process may be too much for a regular business user who just wants to use the data in the Data Catalog. Although OpenMetadata promotes an easy way to ingest data and set up connectors through the user interface (UI), in our research these steps had to be done manually and required some development skills.

- Lastly, and most importantly, the connectors that matter most to us, S3 and GCS, have no out-of-the-box solutions, while Kafka has only metadata ingestion implemented, with potential for other features (like data profiling, lineage…) to be developed as well.
