Tihana Britvic, Ana Marija Galic

DATA ENGINEERS

This is our last blog post on data governance, and there is still a lot to cover, so let’s get going!

In our previous blog posts, we talked about the basics of data governance, covering definitions, roles, and responsibilities. If you are new to this topic, we recommend reading the first two parts here: Part 1 and Part 2. To refresh, data governance is a set of principles and practices that ensure high quality throughout the complete lifecycle of your data. Simply put, data governance is what you do when you need data that is trustworthy, easily available, usable, integrated, and secure. There are a few key components needed to achieve all this, and we will present them here.

What will we cover today?

- Data Quality

- MDM

- Data Catalog

- Data Labeling

- Data Lineage

DATA QUALITY

Data quality is the degree to which data is accurate, complete, timely, and consistent with all requirements and business rules.
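Several of the dimensions in this definition can be measured directly. The sketch below assumes a few things that are not part of this post: records arrive as Python dictionaries, the required fields are fixed, the valid country codes are a business rule, and data older than one year counts as stale. All field names and thresholds are hypothetical. Each check is scored as the share of rows that pass it. Accuracy is not included, because checking it means comparing the data against the real world, and that is much harder to automate.

```python
from datetime import datetime, timedelta

# Hypothetical customer records; field names are illustrative only.
records = [
    {"id": 1, "email": "jane@example.com", "country": "HR", "updated_at": datetime(2024, 5, 1)},
    {"id": 2, "email": "", "country": "FR", "updated_at": datetime(2024, 5, 2)},
    {"id": 3, "email": "john@example.com", "country": "XX", "updated_at": datetime(2020, 1, 1)},
]

REQUIRED_FIELDS = ("id", "email", "country")  # assumed completeness rule
VALID_COUNTRIES = {"HR", "DE", "US"}          # assumed business rule
MAX_AGE = timedelta(days=365)                 # assumed timeliness threshold


def completeness(rows):
    """Share of rows where every required field is present and non-empty."""
    ok = sum(all(row.get(f) not in (None, "") for f in REQUIRED_FIELDS) for row in rows)
    return ok / len(rows)


def timeliness(rows, now):
    """Share of rows updated within MAX_AGE of `now`."""
    ok = sum(now - row["updated_at"] <= MAX_AGE for row in rows)
    return ok / len(rows)


def consistency(rows):
    """Share of rows whose country code satisfies the assumed business rule."""
    ok = sum(row["country"] in VALID_COUNTRIES for row in rows)
    return ok / len(rows)


now = datetime(2024, 6, 1)
report = {
    "completeness": completeness(records),
    "timeliness": timeliness(records, now),
    "consistency": consistency(records),
}
print(report)
```

In practice, data stewards would agree on the rules, and engineers would run these checks automatically. The scores could then be tracked over time.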

Why?

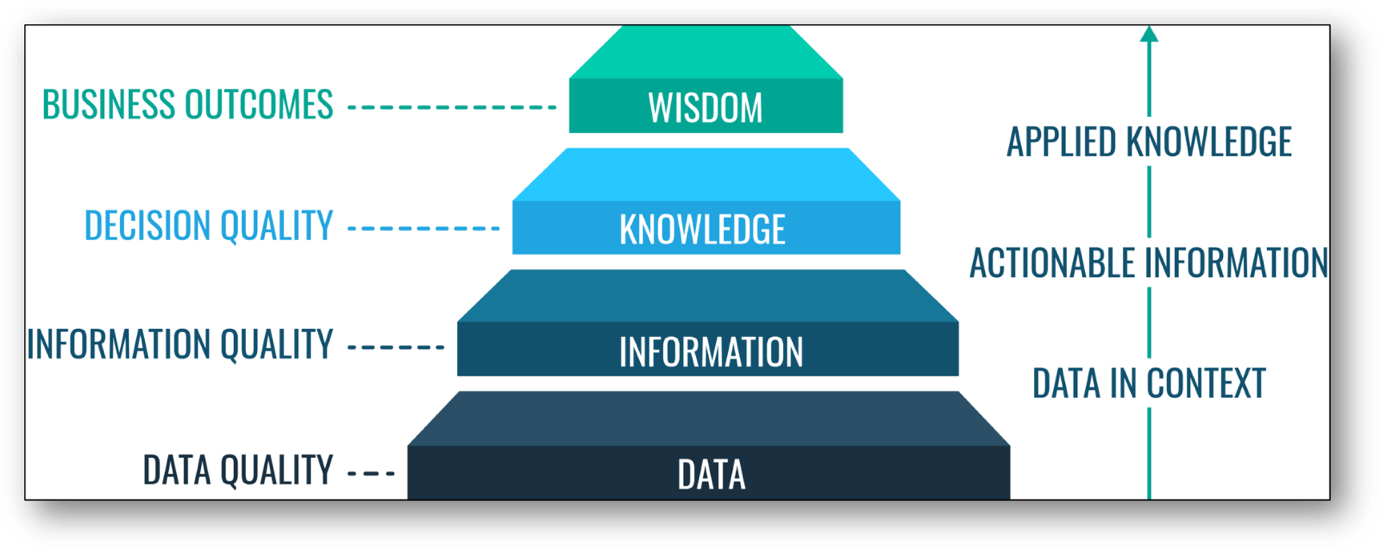

As the picture above shows, data quality is the foundation of quality information, which can be turned into knowledge that enables quality decision-making. That knowledge can then result in “wisdom”, also known as good business outcomes. On the other hand, poor data quality can lead to wrong or risky business decisions, missed opportunities, and financial loss.

Data quality is a key ingredient for organizations to become data-driven, but what does it mean for data to be of high quality? Well, there are multiple answers to that question, depending on whether you ask a consumer or the business. We will mention the two most common ones here.

The first states that data is of high quality if it correctly represents the real-world entity it describes. The second states that data is of high quality if it is fit for its intended use. These definitions, as well as others, often lead to disagreements between different parties in an organization. In that case, data governance helps them agree on common definitions and standards.

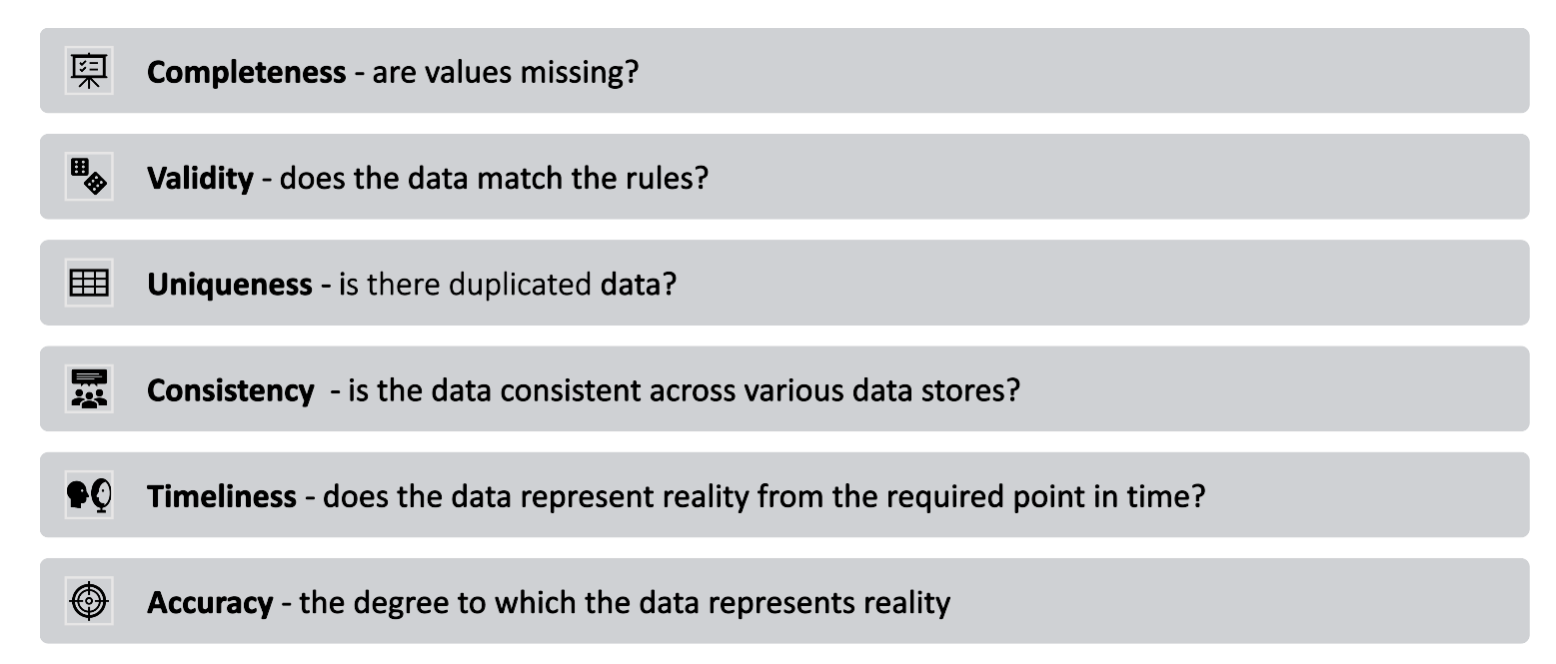

Evaluating data quality

To help you evaluate whether your data is of high quality, here is a list of six attributes and the questions you should be able to answer about your data.

MASTER DATA MANAGEMENT (MDM)

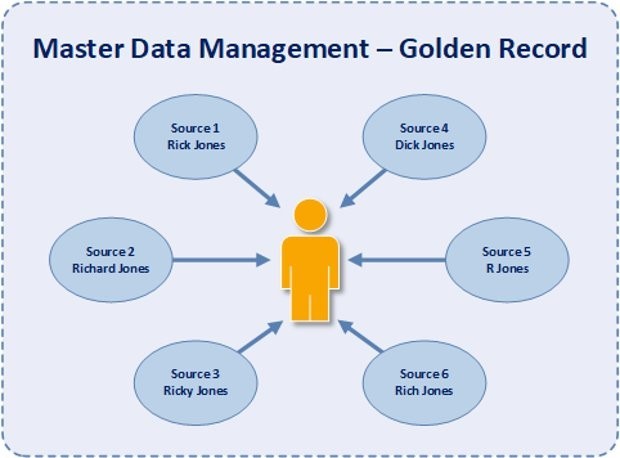

Let’s start by explaining what master data is. Master data is the uniform set of identifiers and attributes that describe the core elements of the enterprise, such as customers, consumers, employees, vendors, sites, hierarchies, and much more. The idea is to create a “gold copy”, also referred to as the “golden record”. It is the single source of truth for a crucial data subject (e.g., a customer), and all other uses of that element must comply with that central gold copy.

What is MDM?

A great definition from Gartner states that “master data management (MDM) is a technology-enabled discipline in which business and IT work together to ensure the uniformity, accuracy, stewardship, semantic consistency and accountability of the enterprise’s official shared master data assets.” In other words, MDM makes sure that master data is up to date and has the same value throughout the whole organization. We like Gartner’s definition because it emphasizes all three key areas. It is something we (engineers) do to data, not manually but with the help of technology, and not by ourselves but based on input from the business. Data engineers can design these systems, but they probably do not know the sources or the business logic well enough to determine which source should be treated as primary, and in which cases.
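This split of responsibilities can be illustrated with a minimal sketch of a golden record built with a simple "source priority" survivorship rule. The sketch assumes that the records describing one customer have already been matched across systems. The system names, attributes, and priority lists are hypothetical. In a real MDM setup, the business would decide the priorities, and engineers would implement the mechanism.

```python
# Hypothetical records for the same customer, one per source system.
source_records = {
    "CRM": {"name": "Jane Doe", "email": None, "phone": None},
    "ERP": {"name": "J. Doe", "email": "jane.doe@example.com", "phone": "+385 1 234 5678"},
    "WEBSHOP": {"name": "Jane D.", "email": "", "phone": "+385 99 000 0000"},
}

# Hypothetical survivorship rules, supplied by the business: for each attribute,
# the source systems in order of trust. The first non-empty value wins.
SOURCE_PRIORITY = {
    "name": ["CRM", "ERP", "WEBSHOP"],
    "email": ["CRM", "WEBSHOP", "ERP"],
    "phone": ["ERP", "WEBSHOP", "CRM"],
}


def build_golden_record(records, priority):
    """Return (golden_record, provenance); provenance maps each attribute to the winning source."""
    golden, provenance = {}, {}
    for attribute, sources in priority.items():
        golden[attribute] = None
        provenance[attribute] = None
        for source in sources:
            value = records.get(source, {}).get(attribute)
            if value:  # skip missing (None) or empty values
                golden[attribute] = value
                provenance[attribute] = source
                break
    return golden, provenance


golden, provenance = build_golden_record(source_records, SOURCE_PRIORITY)
print(golden)
print(provenance)
```

Keeping the provenance alongside the golden record is a design choice. It lets stewards see which system "won" for each attribute and adjust the rules if needed.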

Why?

Each enterprise uses multiple applications and systems (e.g., ERP, CRM, …) for its everyday work. Ideally, each application or system performs its own specific task. More often than not, however, multiple systems are used for the same purpose. This usually happens for historical reasons, such as mergers and acquisitions, or because of local government restrictions that apply to global companies. As a result, the same entity instance can have the same attributes stored in multiple places, or its data can be scattered across systems (like an employee who is also a customer), and not all systems will be up to date.

This can lead to major errors, such as propagating incorrect values, and can even affect customers and the business. Basic examples include:

- sending the same marketing email or message to the same customer multiple times;
- showing an item as in stock when it is not;
- or, vice versa, showing an item as sold out when it is sitting on a shelf somewhere.

Fragmented, duplicated, or out-of-date data also makes calculating basic KPIs or measures painful or even impossible. We are talking about answering questions such as “how many customers do we have?” or “how many X have we sold?” By implementing MDM for at least some entities (such as customers), we lower the risk of errors and improve our data quality.
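The question “how many customers do we have?” shows the problem clearly. Simply adding up the records from every system overcounts customers who exist in more than one system. The minimal sketch below uses hypothetical extracts from two systems and matches records on a normalized email address. Matching on email alone is an assumption made for brevity. Real MDM matching usually combines several attributes and fuzzy rules.

```python
# Hypothetical customer extracts from two systems that serve overlapping purposes.
crm_customers = [
    {"id": "C-1", "email": "Jane.Doe@Example.com"},
    {"id": "C-2", "email": "john@example.com"},
]
erp_customers = [
    {"id": "E-17", "email": "jane.doe@example.com "},
    {"id": "E-18", "email": "mia@example.com"},
]


def normalize_email(email):
    """Assumed matching key: the trimmed, lower-cased email address."""
    return email.strip().lower()


all_records = crm_customers + erp_customers
naive_count = len(all_records)  # counts Jane Doe twice

# Group record IDs by matching key; each group stands for one real-world customer.
clusters = {}
for record in all_records:
    clusters.setdefault(normalize_email(record["email"]), []).append(record["id"])

print(naive_count)
print(len(clusters))
print(clusters)
```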

DATA CATALOG

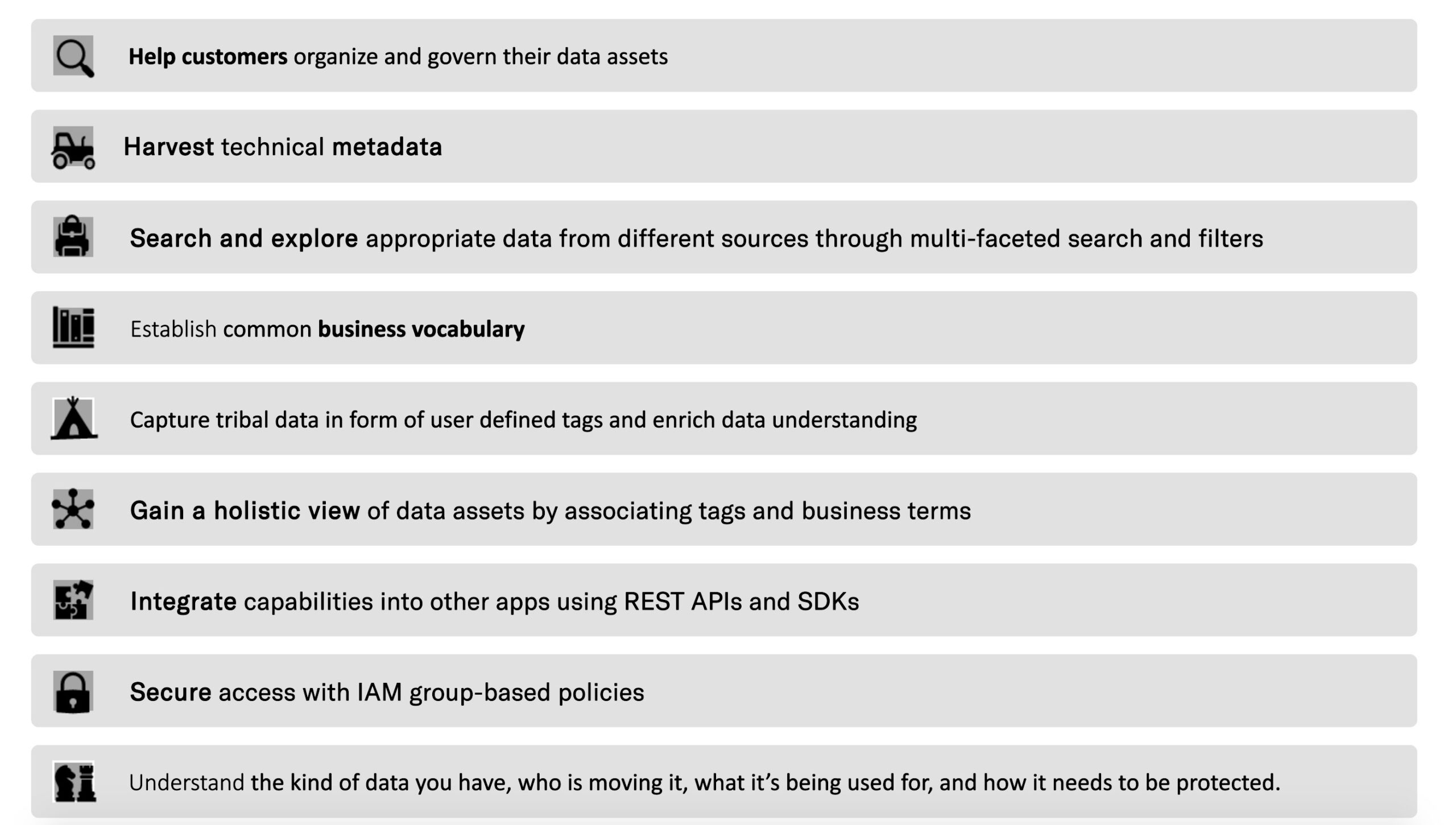

A data catalog is an organized inventory of the data assets within an organization. It is a single collaborative solution that helps data professionals collect, organize, access, and enrich metadata to support self-service data discovery and data governance. We would like to emphasize two key words even further. The first is “collaborative”. It stresses the need for different areas of the business and organization to work together on curating and explaining the data (data stewards), and on supporting this collaboration (data engineers). The second is “self-service”, which tells us that data should be documented so that anybody in the organization knows where to find it and how to use it.

A data catalog uses metadata to help organizations manage their data. Metadata is “data about data”: it defines the content of a data object and tells us where the “real” data is and what it means.
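Catalog products do far more than this. Still, the core idea of metadata that points to the real data can be shown with a toy in-memory catalog. In this sketch, each entry records what a dataset is, where it lives, and who owns it, and a simple search supports self-service discovery. The class names, fields, and location strings are hypothetical and are not taken from any particular catalog tool.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Metadata about one dataset: what it is, where it lives, and who owns it."""
    name: str
    description: str
    location: str  # hypothetical "warehouse.schema.table" path
    owner: str
    tags: list[str] = field(default_factory=list)


class DataCatalog:
    def __init__(self):
        self._entries = {}

    def register(self, entry):
        self._entries[entry.name] = entry

    def search(self, term):
        """Case-insensitive search over names, descriptions, and tags."""
        term = term.lower()
        return [
            e for e in self._entries.values()
            if term in e.name.lower()
            or term in e.description.lower()
            or any(term in t.lower() for t in e.tags)
        ]


catalog = DataCatalog()
catalog.register(CatalogEntry(
    name="customers",
    description="Golden customer records from MDM",
    location="warehouse.sales.customers",
    owner="sales-data-stewards",
    tags=["customer", "pii"],
))
catalog.register(CatalogEntry(
    name="daily_orders",
    description="Orders aggregated per day",
    location="warehouse.sales.daily_orders",
    owner="data-engineering",
    tags=["orders"],
))

for entry in catalog.search("customer"):
    print(entry.name, entry.location, entry.owner)
```

A search result answers the questions this section raises: where the data is, and who to ask about it.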

Why?

Simply put, a data catalog should help you find data when the organization holds high volumes of it. It sounds simple, but in large organizations you may not be able to find the data or even its owner, which is a severe problem. A catalog helps you understand what kind of data you have, who is moving it, what it is being used for, and how to access and protect it. It also helps you stay compliant with regulations such as GDPR, HIPAA, and others. In addition, it helps you avoid wrapping the data in so many layers that it becomes too difficult to use, and therefore useless.

A data catalog can be compared to the book catalog in a public library. When you go to a library and need to find a book, you use the catalog to discover all the information you need (metadata). It helps you decide whether you want the book and tells you how to find it. This catalog often covers all connected libraries. You can find every library in the city that has a copy of the book you are seeking, along with all the details you would want about each copy.

DATA LABELING

Data (usage) labels give users the ability to categorize data according to privacy-related considerations and predetermined conditions, so that its use complies with regulations and corporate policies.

Why?

Data labeling allows you to categorize datasets and attributes according to the usage policies that apply to the data. Labels can be applied at any time, which gives you flexibility in how you govern data, but the best approach is to label data as soon as it arrives. Labels are mostly used for:

- medical data (HIPAA);
- personal data covered by privacy regulations (GDPR);
- credit card transactions (PCI DSS);
- research datasets, for instance, data related to COVID-19.
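In its simplest form, labeling attaches labels to attributes and checks those labels against a usage policy before the data is used for a given purpose. In the minimal sketch below, the label names (PHI, PII, PCI), column names, purposes, and the policy itself are all hypothetical. They are only loosely inspired by the regulations listed above and do not encode what any regulation actually requires.

```python
# Hypothetical labels per column (attribute).
column_labels = {
    "patients.diagnosis": {"PHI"},
    "customers.email": {"PII"},
    "payments.card_number": {"PCI"},
    "orders.total": set(),  # reviewed and labeled: no restrictions
}

# Hypothetical usage policy: labels that may not be used for each purpose.
FORBIDDEN_LABELS = {
    "marketing": {"PHI", "PCI"},
    "analytics": {"PCI"},
}


def check_usage(columns, purpose):
    """Return the columns that may NOT be used for the given purpose."""
    forbidden = FORBIDDEN_LABELS[purpose]  # an unknown purpose raises KeyError (fail closed)
    blocked = []
    for column in columns:
        labels = column_labels.get(column)
        # Columns that were never labeled are blocked until someone labels them.
        if labels is None or labels & forbidden:
            blocked.append(column)
    return blocked


print(check_usage(["customers.email", "patients.diagnosis", "orders.total"], "marketing"))
print(check_usage(["new_table.some_column"], "analytics"))
```

Blocking unlabeled columns by default is one way to encourage labeling data as soon as it arrives. If nobody labels a column, nobody can use it.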

DATA LINEAGE

Data lineage is the journey of data over time, from the source where it is created, through its transformations, to its final destination. Simply put, data lineage is knowing exactly where data is coming from and where it is going at all times.

Why?

Data lineage provides visibility and helps you trace errors back to their root cause in a data analytics process. It also enables you to replay specific parts of the data flow, either for step-by-step debugging or to regenerate lost output. Lineage is often represented visually. The picture shows how data flows and the changes, splits, or other transformations (like parameter changes) it goes through.
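Underneath the visual representation, lineage is a directed graph. Tracing an error back to its root cause means walking the graph upstream. Checking what a broken source affects means walking it downstream. The minimal sketch below uses a breadth-first walk over a hypothetical graph of five datasets. Real lineage tools collect this graph automatically from pipelines and queries. Here it is written by hand.

```python
from collections import deque

# Hypothetical lineage graph: each dataset maps to the datasets it is built from.
upstream = {
    "sales_dashboard": ["daily_revenue"],
    "daily_revenue": ["orders_clean", "fx_rates"],
    "orders_clean": ["orders_raw"],
    "orders_raw": [],
    "fx_rates": [],
}

# Invert the graph once so we can also walk downstream (dataset -> its consumers).
downstream = {}
for child, parents in upstream.items():
    for parent in parents:
        downstream.setdefault(parent, []).append(child)


def _walk(graph, start):
    """Breadth-first traversal; returns every node reachable from `start`, nearest first."""
    seen, queue, order = set(), deque([start]), []
    while queue:
        for neighbor in graph.get(queue.popleft(), []):
            if neighbor not in seen:
                seen.add(neighbor)
                order.append(neighbor)
                queue.append(neighbor)
    return order


def trace_upstream(dataset):
    """All sources a dataset depends on: candidates for the root cause of an error."""
    return _walk(upstream, dataset)


def trace_downstream(dataset):
    """All datasets affected if this one is wrong: candidates for regeneration."""
    return _walk(downstream, dataset)


print(trace_upstream("sales_dashboard"))
print(trace_downstream("orders_raw"))
```

The downstream walk also tells you which parts of the data flow to re-run once the root cause is fixed.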

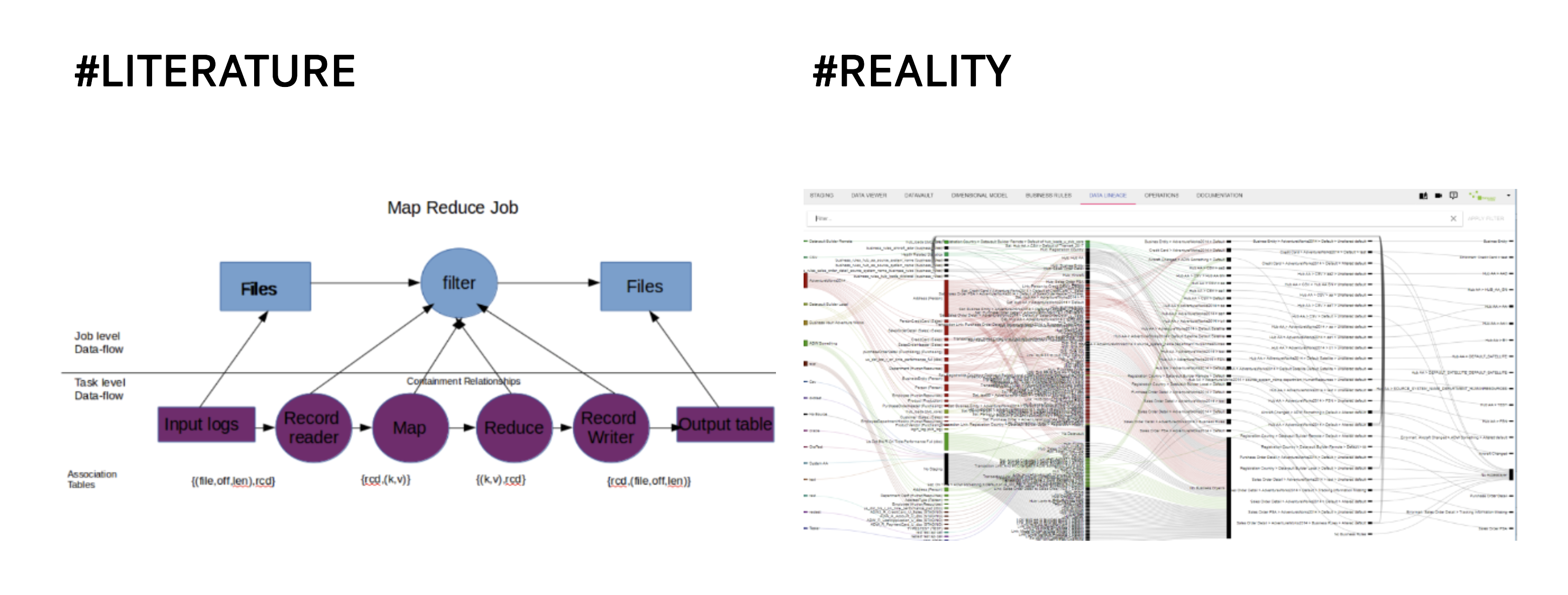

Data lineage is not a new idea. To a certain extent, it already existed in older data warehouses that used self-documenting tools such as Informatica. Another big issue is the visual representation. In theory, it looks great (pardon our pun), but in reality… well, just look at the picture above.

Just like the other topics covered before, data lineage is in theory a simple and straightforward process, but the reality is different. As mentioned in our last post (*LINK*), there are some tools to help us with it, but the real question is: how much can they do for us? In our experience, close communication between IT and business personnel is always more important than tools.

CONCLUSION

Well, this concludes our series of data governance blog posts. Unfortunately, there is no definitive way of doing data governance (DG). We hope we have emphasized the important parts of governance, and what an engineer can do even if there is currently no central governance in your organization.

As always, even the biggest journeys start with a small first step, so we recommend selecting a few areas that need work and starting from there. You can document how to use the data, or where to find it, just as you would in a data catalog. Also, make sure you promote your data through your company’s channels, so that people know it exists.

If you are not using tools that are to some extent self-documenting (for instance, Airflow or Dataflow in GCP), you can draft your processes in Draw.io. Yes, processes will change, and the documentation will stop being up to date at some point. Still, you will at least have something until you implement lineage in some other way. Even simple naming conventions can help people understand what is going on. For example, you can name all pipelines that perform the same transformation in a specific order in the same way, e.g., `customer_<transformation>_01` (where “transformation” describes what is happening). We believe that when other employees see the effort, they will start to do the same. In time, things will improve, and your enterprise will be on its way towards data governance.
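A naming convention is easier to keep consistent if it is checked automatically, for example in code review or CI. The sketch below follows the spirit of the customer pipeline example above. It assumes a hypothetical convention of an entity, a transformation, and a two-digit step, all lower-case and separated by underscores. The pattern and helper names are our own illustration, not a standard.

```python
import re

# Assumed convention: <entity>_<transformation>_<two-digit step>, lower-case letters only.
NAME_PATTERN = re.compile(r"(?P<entity>[a-z]+)_(?P<transformation>[a-z]+)_(?P<step>\d{2})")


def pipeline_name(entity, transformation, step):
    """Build a pipeline name that follows the assumed convention, or raise ValueError."""
    name = f"{entity}_{transformation}_{step:02d}"
    if not NAME_PATTERN.fullmatch(name):
        raise ValueError(f"invalid pipeline name: {name}")
    return name


def parse_pipeline_name(name):
    """Split a conventional name into its parts, or return None if it does not follow the convention."""
    match = NAME_PATTERN.fullmatch(name)
    return match.groupdict() if match else None


print(pipeline_name("customer", "dedupe", 1))
print(parse_pipeline_name("customer_dedupe_01"))
print(parse_pipeline_name("CustomerPipelineFinal"))
```

Because names can be parsed back into their parts, even a simple script can group pipelines by entity and order them by step. This gives you a rough, home-made form of lineage until a proper tool is in place.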
