Tomislav Domanovac

CHIEF DATA ARCHITECT

Message platforms are one of the main tools used in data object distribution and streaming data processing.

In the early days of messaging systems, two processes communicated directly with each other; Unix pipes are a good example. The pipe system call returns two file descriptors, one for reading and one for writing, so one process can write data into the pipe (typically through its stdout) while the other process reads it (typically through its stdin). Let’s call that a direct communication channel.
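As a minimal sketch of such a direct channel, the example below uses Python's `os.pipe()`, which wraps the pipe system call. The function name `send_over_pipe` is made up for illustration, and a thread stands in for the second process to keep the example short; a real setup would use two separate processes.

```python
import os
import threading


def send_over_pipe(messages):
    """Send each message through an OS pipe and return what the reader received."""
    # os.pipe() returns two file descriptors: the read end and the write end.
    read_fd, write_fd = os.pipe()

    def producer():
        # The producer owns the write end. Closing it (end of the with block)
        # signals end-of-file to the reader.
        with os.fdopen(write_fd, "w") as writer:
            for message in messages:
                writer.write(message + "\n")

    thread = threading.Thread(target=producer)
    thread.start()

    # The single consumer reads line by line until the write end is closed.
    with os.fdopen(read_fd, "r") as reader:
        received = [line.rstrip("\n") for line in reader]

    thread.join()
    return received


if __name__ == "__main__":
    print(send_over_pipe(["reading 1", "reading 2"]))
```

Note that the channel connects exactly one writer to one reader, and the data is gone once it has been read.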

Direct communication

Now, this very basic model does not allow multiple producers to send messages or multiple consumers to consume them. Its fault tolerance is limited, and it assumes that both the producer and the consumer are constantly available. Such an exclusive connection is a poor fit when data from multiple producers has to reach multiple consumers, so it is not something to recommend when building data processing platforms.

A much better (and more reliable) option is to send messages via a message broker, a system that is built and optimized for streaming messages and acts as a server component with both consumers and producers as clients. By introducing a central component, the broker, this model achieves much higher fault tolerance, since message delivery no longer depends on the behavior of individual clients. Decoupling often helps in software architecture, and it certainly does in this case. Most message brokers also offer two interfaces, a publisher interface and a subscriber interface, which help data producers and data consumers use the broker efficiently. In a nutshell, the producer publishes a message to a topic, and one or many consumers subscribe to the topics that hold the messages they want to consume. That means that every subscriber to a topic will get each message published to that topic by its publisher (or publishers).
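The publish and subscribe interfaces can be sketched with a toy in-memory broker. This is an illustration only: the class `InMemoryBroker` and its method names are assumptions made for this example, not the API of any real message platform, and a real broker would add networking, buffering, and delivery guarantees.

```python
from collections import defaultdict


class InMemoryBroker:
    """Toy broker that keeps a list of subscriber callbacks per topic."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        # Subscriber interface: register interest in a topic.
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # Publisher interface: fan the message out to every subscriber
        # of the topic and report how many deliveries were made.
        delivered = 0
        for callback in list(self._subscribers.get(topic, [])):
            callback(message)
            delivered += 1
        return delivered


if __name__ == "__main__":
    broker = InMemoryBroker()
    billing, analytics = [], []
    broker.subscribe("orders", billing.append)
    broker.subscribe("orders", analytics.append)
    broker.publish("orders", {"order_id": 1})
    print(billing, analytics)
```

The producer only knows the topic name, never the consumers, which is the decoupling described above.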

Generally speaking, message brokers do not store data forever; most of them delete a message as soon as it has been successfully delivered (consumed). Since storage costs keep falling, it is a good idea to create a separate subscriber (consumer) that stores all messages in their original format. It’s much easier to replay or reprocess messages from storage than to convince data producers to produce them again (which is often impossible).
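A storing subscriber of this kind could look roughly like the sketch below. The class name `ArchiveConsumer`, the JSON Lines file layout, and the assumption that messages are JSON-serializable are all choices made for this example; in practice the storage would more likely be an object store or a data lake.

```python
import json
import tempfile
from pathlib import Path


class ArchiveConsumer:
    """Hypothetical subscriber that appends every message to a file, unmodified."""

    def __init__(self, path):
        self._path = Path(path)

    def handle(self, message):
        # Store the message as received, one JSON document per line.
        with self._path.open("a", encoding="utf-8") as archive:
            archive.write(json.dumps(message) + "\n")

    def replay(self):
        # Yield the stored messages in the order they were archived.
        if not self._path.exists():
            return
        with self._path.open("r", encoding="utf-8") as archive:
            for line in archive:
                yield json.loads(line)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as folder:
        consumer = ArchiveConsumer(Path(folder) / "orders.jsonl")
        consumer.handle({"order_id": 1})
        consumer.handle({"order_id": 2})
        print(list(consumer.replay()))
```

Reprocessing then means iterating over `replay()` instead of asking the producers to send the data again.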

In most cases, message brokers support these three patterns.

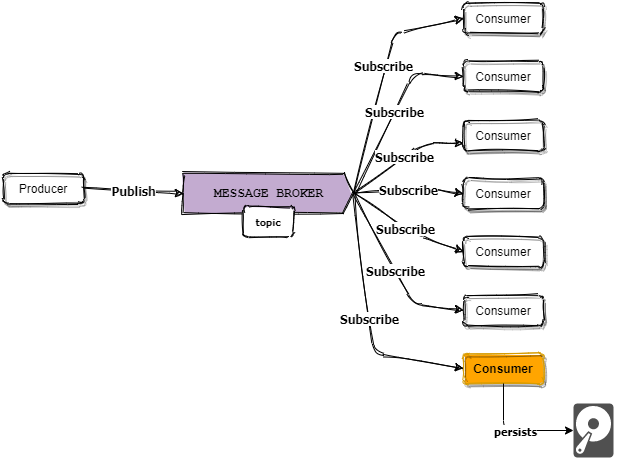

1. One producer, multiple consumers – let’s call it OPMC

What we can see here is that we use the message broker as a sort of distributor. Each consumer has its own subscription to the topic where the data producer is publishing and can consume the data in its own way and for its own purpose. The typical use case for the OPMC pattern is a producer sending an elaborate business object that can be processed and interpreted by a number of apps, services, and analytic engines. That means processing the same message in different contexts and with different requirements. When deploying cloud-provided broker services and consumers, it’s generally a good idea, performance-wise, to keep them as close as possible to the producer’s region.

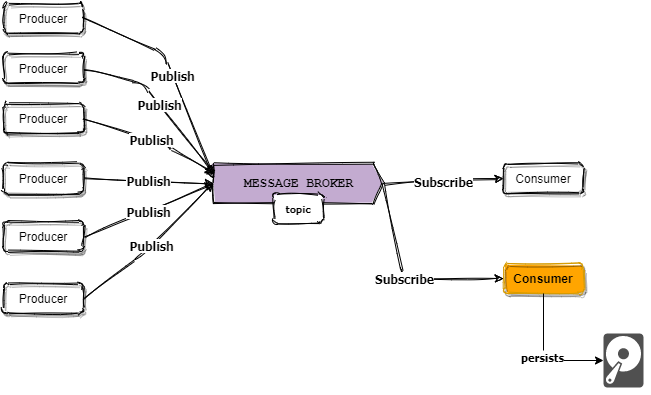

2. Multiple producers, one consumer – MPOC for friends and acquaintances

This pattern is often used in IoT scenarios, where it is referred to as the collector pattern. In those cases we often have one processing unit as a consumer, subscribed to a topic, and a large number of sensors or devices sending their data. The challenge in this pattern is scaling the processing unit so that it can handle data that sometimes arrives in huge volumes and at high frequency.
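The collector pattern can be sketched with threads and a queue standing in for the sensors and the topic. Everything here is an assumption for illustration: `run_collector` is a made-up name, `queue.Queue` plays the role of the broker topic, and the readings are just `(sensor_id, sequence_number)` pairs.

```python
import queue
import threading


def run_collector(sensor_count, readings_per_sensor):
    """Let many sensor threads publish to one queue drained by one collector."""
    topic = queue.Queue()  # stands in for the broker topic
    collected = []

    def sensor(sensor_id):
        # Each sensor publishes its readings independently of the others.
        for sequence_number in range(readings_per_sensor):
            topic.put((sensor_id, sequence_number))

    def collector():
        # The single consumer processes messages until it sees the sentinel.
        while True:
            item = topic.get()
            if item is None:
                break
            collected.append(item)

    consumer = threading.Thread(target=collector)
    consumer.start()

    producers = [
        threading.Thread(target=sensor, args=(sensor_id,))
        for sensor_id in range(sensor_count)
    ]
    for producer in producers:
        producer.start()
    for producer in producers:
        producer.join()

    topic.put(None)  # sentinel: all sensors are done
    consumer.join()
    return collected


if __name__ == "__main__":
    print(len(run_collector(sensor_count=10, readings_per_sensor=100)))
```

The single `collector` thread is the bottleneck the text refers to: every reading from every sensor passes through it.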

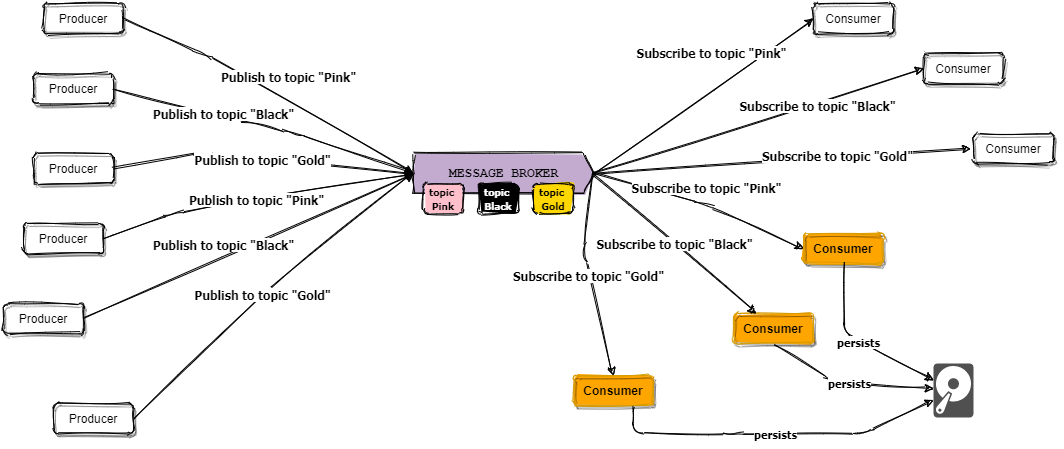

3. Multiple producers, multiple consumers – MPMC, short and sweet.

This pattern is very common. Here the message broker uses topics as data feeds that act as channels of communication between publishers and subscribers.

Important

Some message platforms use different terminology than this text. In some of them, only topics are exposed for creating publisher or subscriber clients. Do not worry, there is always some kind of message broker system behind them. That’s why I like to call the message broker a model, not the name of a component in some message platform.

Notice that in both patterns there is the smart and adorable orange consumer, which can spare you unnecessary headaches with one simple task: offloading the data from the broker and storing it in permanent storage. Remember, in most brokers and message platforms, once you consume the data, it’s gone. That means that if your data processing crashes after you have acknowledged the message, you cannot get it from the broker again. Not great.
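The ordering that matters here can be shown in a few lines. This is a sketch under simplifying assumptions: plain Python lists stand in for the broker's pending messages and for permanent storage, and `consume_one` is a hypothetical helper, not a function of any real client library.

```python
def consume_one(pending, archive, process):
    """Archive one message, acknowledge it, then process it."""
    message = pending[0]
    archive.append(message)  # 1. keep a raw copy in permanent storage
    pending.pop(0)           # 2. acknowledge: the broker forgets the message
    process(message)         # 3. may crash; the archived copy survives


def failing_process(message):
    # Simulates a crash in data processing after the acknowledgment.
    raise RuntimeError("processing crashed")


if __name__ == "__main__":
    pending, archive = ["m1", "m2"], []
    try:
        consume_one(pending, archive, failing_process)
    except RuntimeError:
        pass
    print(pending, archive)
```

After the simulated crash, the broker no longer holds the first message, but the stored copy is still available for reprocessing.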

Conclusion

Message platforms are a fundamental part of your data integration infrastructure. They enable event-driven architectures, sharing and distributing data in real time to every app that subscribes to a broker. They are an important part of moving away from the centralized platform integrations and service-oriented architectures that still dominate enterprises.